This is the fourth post in a series. It may be A Good Idea to take a look at the earlier posts as well… You Have Been Warned!

All substantial applications require management and monitoring; in the Java world, JMX is the standard technology.

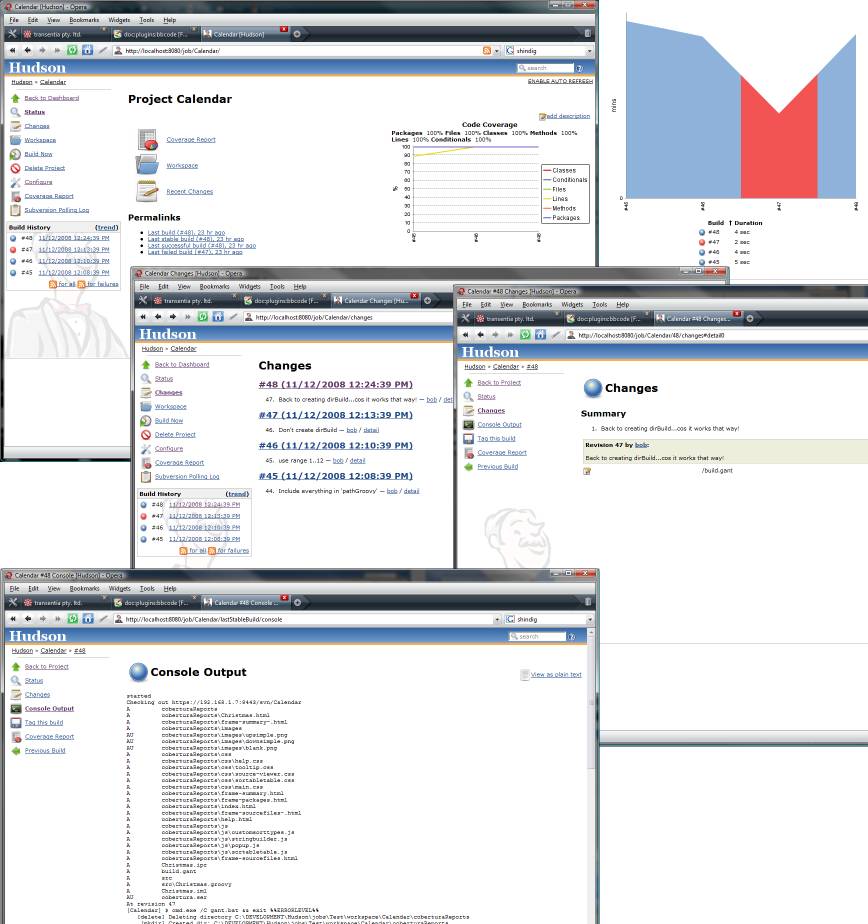

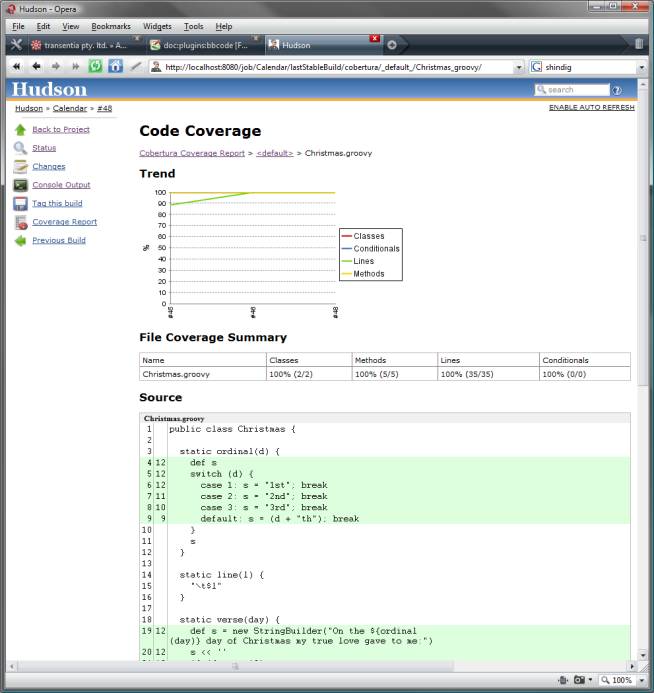

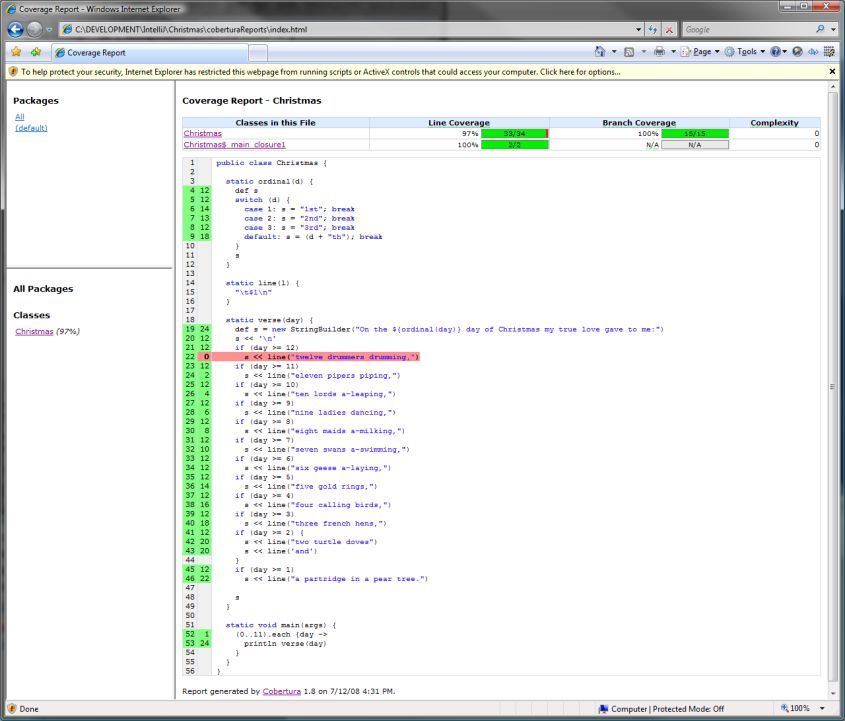

I have instrumented the Calc WebFlow to maintain two JMX-capable counters (MBeans): the total number of flows created since application startup, and the instantaneous count of flows currently active. Both counters (two instances of the same class) are injected into the controller, as this excerpt shows:

    class CalcController {

        // Injected by Spring (autowired by name; see resources.groovy)
        def totalFlowsCreatedSequenceMBean
        def instantaneousFlowCountMBean

        def calcFlow = {
            startup {
                action() {
                    flow.flowCreatedSequence = totalFlowsCreatedSequenceMBean.increment()
                    log.debug "calcFlow startup; this is flow #${flow.flowCreatedSequence}; instantaneous flow count: ${instantaneousFlowCountMBean.increment()}"
                }
                on('success').to 'init'
            }
            shutdown {
                action() {
                    log.debug "calcFlow shutdown; this is flow #${flow.flowCreatedSequence}; instantaneous flow count: ${instantaneousFlowCountMBean.decrement()}"
                }
                on('success').to 'results'
            }
            // the 'init' and 'results' states referenced above are omitted from this excerpt
        }
    }
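To make the difference between the two counters concrete, here is a minimal plain-Java sketch of the bookkeeping the flow performs. The names `FlowCounterSketch`, `flowStarted` and `flowFinished` are hypothetical stand-ins for the `startup` and `shutdown` states; this is not the Calc application's code.

```java
// Hypothetical illustration of the two counters kept by the flow.
class FlowCounterSketch {

    // A tiny thread-safe counter, standing in for the injected MBean instances.
    static class Counter {
        private int value;
        synchronized int increment() { return ++value; }
        synchronized int decrement() { return --value; }
        synchronized int getValue() { return value; }
    }

    final Counter totalFlowsCreated = new Counter(); // only ever goes up
    final Counter activeFlows = new Counter();       // goes up and down

    // Mirrors the 'startup' state: bump both counters, return this flow's sequence number.
    int flowStarted() {
        activeFlows.increment();
        return totalFlowsCreated.increment();
    }

    // Mirrors the 'shutdown' state: only the active count goes back down.
    void flowFinished() {
        activeFlows.decrement();
    }

    public static void main(String[] args) {
        FlowCounterSketch sketch = new FlowCounterSketch();
        sketch.flowStarted();
        sketch.flowStarted();
        sketch.flowStarted();
        sketch.flowFinished();
        sketch.flowFinished();
        // prints "total created: 3, active now: 1"
        System.out.println("total created: " + sketch.totalFlowsCreated.getValue()
                + ", active now: " + sketch.activeFlows.getValue());
    }
}
```

The total counter doubles as a sequence generator, which is why the flow stores its return value in `flow.flowCreatedSequence`.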

Although there is a Grails plugin for JMX, a Groovy JMX DSL, and a GroovyMBean class, configuring JMX is a fairly trivial task, so I'm going to do it "by hand."

In Grails, standard Spring-oriented configuration is done using the Spring Beans DSL in the file grails-app/conf/spring/resources.groovy:

    import org.springframework.jmx.support.MBeanServerFactoryBean
    import org.springframework.jmx.export.MBeanExporter
    import org.springframework.jmx.export.annotation.AnnotationJmxAttributeSource
    import org.springframework.jmx.export.assembler.MetadataMBeanInfoAssembler

    // Place your Spring DSL code here
    beans = {

        // application-level counters
        totalFlowsCreatedSequenceMBean(calc.CounterMBean)
        instantaneousFlowCountMBean(calc.CounterMBean)

        // JMX infrastructure configuration
        mbeanServer(MBeanServerFactoryBean) {
            locateExistingServerIfPossible = true
        }

        attributeSrc(AnnotationJmxAttributeSource)

        assemblr(MetadataMBeanInfoAssembler) {
            attributeSource = attributeSrc
        }

        exporter(MBeanExporter) {
            server = mbeanServer
            assembler = assemblr
            autodetect = true
            beans = ["calc.jmx:counter=totalFlowsCreatedSequenceMBean": totalFlowsCreatedSequenceMBean,
                     "calc.jmx:counter=instantaneousFlowCountMBean": instantaneousFlowCountMBean]
        }
    }

You should be able to see how the 'totalFlowsCreatedSequenceMBean' and 'instantaneousFlowCountMBean' bean instances are created by the underlying Spring infrastructure and then injected into the Calc controller (this very powerful behaviour is "autowiring by name", in Spring parlance).
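For comparison, here is roughly what that export step amounts to when done without Spring, using only the JDK's `javax.management` API. This is a sketch under assumptions: `PlainJmxRegistration`, `SimpleCounter` and `SimpleCounterMBean` are hypothetical names, and the sketch relies on the standard MBean naming convention (a public interface called `<Class>MBean`) instead of the annotations used in this post.

```java
import java.lang.management.ManagementFactory;
import javax.management.MBeanServer;
import javax.management.ObjectName;

// Hypothetical names throughout; none of these classes belong to the Calc application.
class PlainJmxRegistration {

    // Standard MBean convention: a public interface named <ImplementationClass>MBean.
    public interface SimpleCounterMBean {
        int getValue();   // a getter becomes the read-only attribute "Value"
        int increment();  // any other method becomes an operation
    }

    public static class SimpleCounter implements SimpleCounterMBean {
        private int value;
        @Override public synchronized int getValue() { return value; }
        @Override public synchronized int increment() { return ++value; }
    }

    // Register a fresh counter under the given ObjectName and hand it back to the caller.
    static SimpleCounter register(MBeanServer server, String objectName) throws Exception {
        SimpleCounter counter = new SimpleCounter();
        server.registerMBean(counter, new ObjectName(objectName));
        return counter;
    }

    public static void main(String[] args) throws Exception {
        // The platform MBeanServer is the one jconsole sees for a local process.
        MBeanServer server = ManagementFactory.getPlatformMBeanServer();
        SimpleCounter total = register(server, "calc.jmx:counter=totalFlowsCreatedSequenceMBean");
        register(server, "calc.jmx:counter=instantaneousFlowCountMBean");

        // The application uses the object directly; management reads it through the server.
        total.increment();
        ObjectName name = new ObjectName("calc.jmx:counter=totalFlowsCreatedSequenceMBean");
        System.out.println("Value seen via JMX: " + server.getAttribute(name, "Value"));
    }
}
```

The Spring configuration buys you the same result declaratively, plus descriptions taken from the annotations, without the application ever touching the `MBeanServer` itself.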

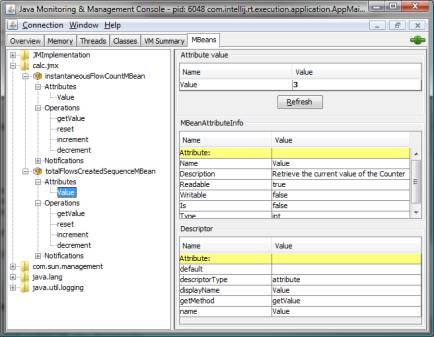

On to the actual JMX MBean. This is written in Groovy (in the directory src/groovy/calc) but I have remained fairly true to the spirit of Java (methods, not closures, for example) to be sure that JMX doesn't get too 'confused':

    package calc

    import org.apache.log4j.Logger
    import org.springframework.jmx.export.annotation.ManagedAttribute
    import org.springframework.jmx.export.annotation.ManagedOperation
    import org.springframework.jmx.export.annotation.ManagedResource

    @ManagedResource (description = "A simple Counter MBean")
    class CounterMBean {

        private static final Logger log = Logger.getLogger(CounterMBean)

        private int value

        CounterMBean() {
            value = 0
            // double quotes are required here: single-quoted Groovy strings do not interpolate $value
            log.debug "CounterMBean constructed; initial value: $value"
        }

        @ManagedAttribute (description = "Retrieve the current value of the Counter")
        public synchronized int getValue() {
            return value
        }

        @ManagedOperation (description = "Bump up the Counter by 1; return new value for Counter")
        public synchronized int increment() {
            return ++value
        }

        @ManagedOperation (description = "Reduce the Counter by 1; return new value for Counter")
        public synchronized int decrement() {
            return --value
        }

        @ManagedOperation (description = "Reset the Counter to 0")
        public synchronized int reset() {
            value = 0
            return value
        }
    }

This MBean exposes a single attribute, 'value', and three operations: 'increment', 'decrement' and 'reset'. These are available both to the application itself and to the management infrastructure.

One key point here (often overlooked, however) is that all the methods must be synchronized to prevent strange and wonderful race conditions.
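A small plain-Java sketch shows what the synchronization guarantees. The class and method names (`SynchronizedCounterDemo`, `hammer`) are hypothetical, and the thread counts are arbitrary; the point is only that with `synchronized` methods no increment is ever lost, whichever threads (request handlers or JMX connector threads) happen to call in.

```java
// Hypothetical demo: several threads increment one synchronized counter concurrently.
class SynchronizedCounterDemo {

    static class Counter {
        private int value;
        // ++value is a read-modify-write; 'synchronized' makes it atomic across threads.
        synchronized int increment() { return ++value; }
        synchronized int getValue() { return value; }
    }

    // Start 'threads' workers that each increment the counter 'perThread' times.
    static int hammer(int threads, int perThread) throws InterruptedException {
        Counter counter = new Counter();
        Thread[] workers = new Thread[threads];
        for (int i = 0; i < threads; i++) {
            workers[i] = new Thread(() -> {
                for (int j = 0; j < perThread; j++) {
                    counter.increment();
                }
            });
            workers[i].start();
        }
        for (Thread worker : workers) {
            worker.join(); // wait for every worker before reading the result
        }
        return counter.getValue();
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println(hammer(8, 10_000)); // prints 80000
    }
}
```

Remove the `synchronized` keywords and the final figure can come out lower, because two threads may read the same old value before either writes its update back.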

Notice how operations and attributes are declared and exported via Java annotations. If you look back at the Spring configuration shown earlier, you will see 'AnnotationJmxAttributeSource' being used to pick up and export appropriately annotated classes.

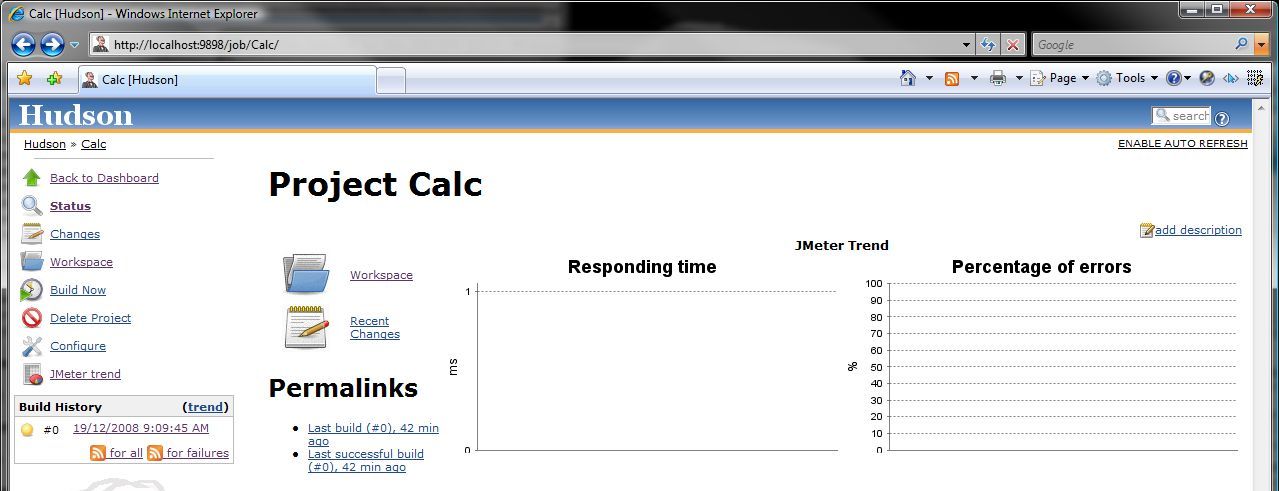

It is easy to see how this all comes together by starting up jconsole and looking for the MBeans registered under the calc.jmx domain.
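What jconsole does through its GUI can also be done in code. The sketch below runs in-process against an `MBeanServer`, using only JDK calls (`queryNames`, `getAttribute`, `invoke`); the `CalcJmxBrowser` and `DemoCounter` names are hypothetical, and the demo counter stands in for the real `CounterMBean` so that the example runs without Grails.

```java
import java.lang.management.ManagementFactory;
import java.util.Set;
import javax.management.MBeanServer;
import javax.management.ObjectName;

// Hypothetical names; DemoCounter is a stand-in for the application's CounterMBean.
class CalcJmxBrowser {

    public interface DemoCounterMBean {
        int getValue();
        int increment();
        int reset();
    }

    public static class DemoCounter implements DemoCounterMBean {
        private int value;
        @Override public synchronized int getValue() { return value; }
        @Override public synchronized int increment() { return ++value; }
        @Override public synchronized int reset() { value = 0; return value; }
    }

    // Find every MBean registered in the calc.jmx domain, whatever its key properties.
    static Set<ObjectName> findCalcMBeans(MBeanServer server) throws Exception {
        return server.queryNames(new ObjectName("calc.jmx:*"), null);
    }

    // Read the "Value" attribute, as jconsole's Attributes tab would.
    static int readValue(MBeanServer server, ObjectName name) throws Exception {
        return (Integer) server.getAttribute(name, "Value");
    }

    // Invoke a no-argument operation, as jconsole's Operations tab would.
    static Object call(MBeanServer server, ObjectName name, String operation) throws Exception {
        return server.invoke(name, operation, new Object[0], new String[0]);
    }

    public static void main(String[] args) throws Exception {
        MBeanServer server = ManagementFactory.getPlatformMBeanServer();
        ObjectName demo = new ObjectName("calc.jmx:counter=demoCounter");
        server.registerMBean(new DemoCounter(), demo);

        call(server, demo, "increment");
        call(server, demo, "increment");
        for (ObjectName name : findCalcMBeans(server)) {
            System.out.println(name + " -> " + readValue(server, name));
        }
        call(server, demo, "reset");
    }
}
```

A remote client would go through a JMX connector to obtain an `MBeanServerConnection`, which offers these same three methods.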

Adding JMX into the mix is so simple for any Spring-based application (and Grails is Spring-based, of course) that there is almost no excuse for not adding this level of monitor-ability and manage-ability to an application.

What are you waiting for? Go to it!